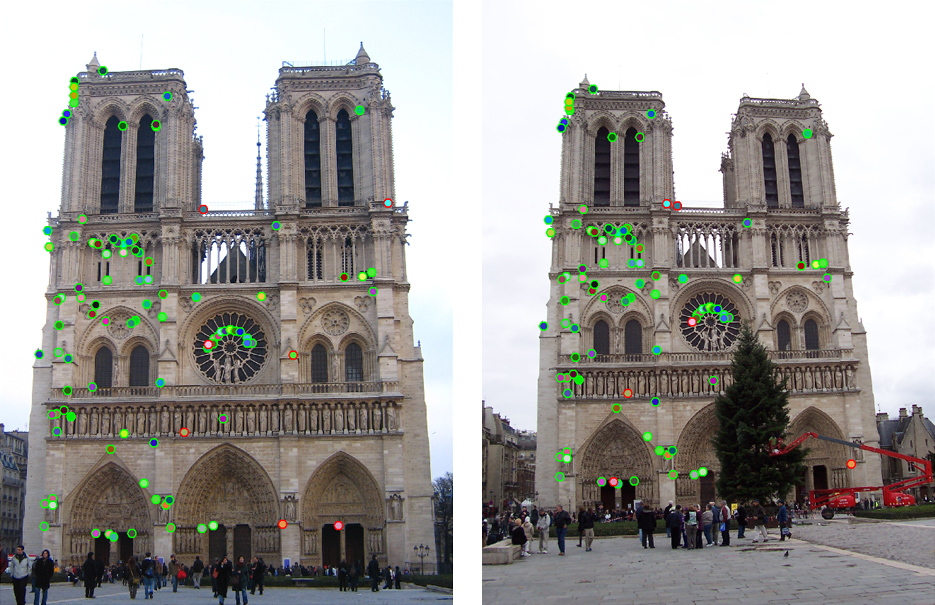

The top 100 most confident local feature matches from a baseline implementation of project 2. In this case, 93 were correct (highlighted in green) and 7 were incorrect (highlighted in red).

Project 2: Local Feature Matching

CSCI 1430: Introduction to Computer Vision

Brief

- Due: 9:00pm on Friday, 24th February, 2016

- Project materials including writeup template proj2.zip (6.9 MB).

- Additional scenes to test on extra_data.zip (194 MB).

- Handin: through $ cs1430_handin proj2

- Required files: README, code/, html/, html/index.html

Overview

The goal of this assignment is to create a local feature matching algorithm using techniques described in Szeliski chapter 4.1. The pipeline we suggest is a simplified version of the famous SIFT pipeline. The matching pipeline is intended to work for instance-level matching—multiple views of the same physical scene.

Details

For this project, you need to implement the three major steps of a local feature matching algorithm:

- Interest point detection in

get_interest_points.m(see Szeliski 4.1.1) - Local feature description in

get_features.m(see Szeliski 4.1.2) - Feature Matching in

match_features.m(see Szeliski 4.1.3)

There are numerous papers in the computer vision literature addressing each stage. For this project, we will suggest specific, relatively simple algorithms for each stage. You are encouraged to experiment with more sophisticated algorithms!

Interest point detection (get_interest_points.m)

You will implement the Harris corner detector as described in the lecture materials and Szeliski 4.1.1. See Algorithm 4.1 in the textbook for pseudocode. The starter code gives some additional suggestions. You do not need to worry about scale invariance or keypoint orientation estimation for your baseline Harris corner detector.

Local feature description (get_features.m)

You will implement a SIFT-like local feature as described in the lecture materials and Szeliski 4.1.2. See the placeholder get_features.m for more details. If you want to get your matching pipeline working quickly (and maybe to help debug the other algorithm stages), you might want to start with normalized patches as your local feature.

Feature matching (match_features.m)

You will implement the "ratio test" or "nearest neighbor distance ratio test" method of matching local features as described in the lecture materials and Szeliski 4.1.3. See equation 4.18 in particular.

Using the starter code (proj2.m)

The top-level proj2.m script provided in the starter code includes file handling, visualization, and evaluation functions for you, as well as calls to placeholder versions of the three functions listed above. Running the starter code without modification will visualize random interest points matched randomly on the particular Notre Dame images shown at the top of this page. The correspondence will be visualized with show_correspondence.m and show_correspondence2.m (you can comment one or both out if you prefer).

For the Notre Dame image pair there is a ground truth evaluation in the starter code, as well. evaluate_correspondence.m will classify each match as correct or incorrect based on hand-provided matches (see show_ground_truth_corr.m for details). The starter code also contains ground truth correspondences for two other image pairs (Mount Rushmore and Episcopal Gaudi). You can test on those images by uncommenting the appropriate lines in proj2.m. You can create additional ground truth matches with collect_ground_truth_corr.m (but it's a tedious process).

As you implement your feature matching pipeline, you should see your performance according to evaluate_correspondence.m increase. Hopefully you find this useful, but don't overfit to the initial Notre Dame image pair which is relatively easy. The baseline algorithm suggested here and in the starter code will give you full credit and work fairly well on these Notre Dame images, but additional image pairs provided in extra_data.zip are more difficult. They might exhibit more viewpoint, scale, and illumination variation. With careful consideration of the qualities of the images on display, you should be able to match more difficult image pairs.

Suggested implementation strategy

It is suggested that you implement the functions in this order:

- First, use

cheat_interest_points()instead ofget_interest_points(). This function will only work for the 3 image pairs with ground truth correspondence. This function cannot be used in your final implementation. It directly loads the 100 to 150 ground truth correspondences for the test cases. Even with this cheating, your accuracy will initially be near zero because the features are random and the matches are random. - Second, implement

match_features(). Accuracy should still be near zero because the features are random. - Third, change

get_features()to cut out image patches. Accuracy should increase to ~40% on the Notre Dame pair if you're using 16x16 (256 dimensional) patches as your feature. Accuracy on the other test cases will be lower (Mount Rushmore 25%, Episcopal Gaudi 7%). Image patches aren't a great feature (they're not invariant to brightness change, contrast change, or small spatial shifts) but this is simple to implement and provides a baseline. - Fourth, finish

get_features()by implementing a SIFT-like feature. Accuracy should increase to 70% on the Notre Dame pair, 40% on Mount Rushmore, and 15% on Episcopal Gaudi. These accuracies still aren't great because the human-selected correspondences might look quite different at the local feature level. If you're sorting your matches by confidence (as the starter code does inmatch_features()) you should notice that your more confident matches (which pass the ratio test more easily) are more likely to be true matches. - Fifth, stop cheating and implement

get_interest_points(). Harris corners aren't as good as ground-truth points (which we know to correspond), so accuracy may drop. On the other hand, you can get hundreds or even a few thousand interest points, so you have more opportunities to find confident matches. If you only evaluate the most confident 100 matches (see thenum_pts_to_evaluateparameter) on the Notre Dame pair, you should be able to achieve 90% accuracy. As long as your accuracy on the Notre Dame image pair is 80% for the 100 most confident matches, you can receive full credit for the project.

You will likely need to do extra credit to get high accuracy on Mount Rushmore and Episcopal Gaudi.

Potentially useful MATLAB functions: imfilter(), fspecial(), bwconncomp(), colfilt(), sort(), atan2().

Forbidden functions you can use for testing, but not in your final code: gradient(), extractFeatures(), detectSURFFeatures(), matchFeatures(), and similar..

Writeup

For this project, and all other projects, you must do a project report in HTML. We provide you with a placeholder .html document which you can edit. In the report you will describe your algorithm and any decisions you made to write your algorithm a particular way. Then you will show and discuss the results of your algorithm.

For this project specifically, show how well your matching method works not just on the Notre Dame image pair, but on additional test cases. For the 3 image pairs with ground truth correspondence, you can show eval.jpg which the starter code generates. For other image pairs, there is no ground truth evaluation (you can make it!) so you can show vis_dots.jpg or vis_arrows.jpg instead. A good writeup will assess how important various design decisions were. E.G., by using SIFT-like features instead of normalized patches, I went from 70% good matches to 90% good matches. This writeup explanation is especially important if you completed some of the extra credit challenges. You should clearly demonstrate how your additions changed the behavior on particular test cases.

Extra Credit

Students taking CSCI 1430 as a capstone are required to do 10 points worth of extra credit from the suggestions below. Extra credit beyond that can increase your grade over 100. The max score for all students is 110.

For all extra credit, be sure to include quantitative analysis showing the impact of the particular method you've implemented. Each item is "up to" some amount of points because trivial implementations may not be worthy of full extra credit.

Interest point detection extra credit:

- up to 5 pts: Try detecting keypoints at multiple scales or using a scale selection method to pick the best scale.

- up to 5 pts: Try estimating the orientation of keypoints to make your local features rotation invariant.

- up to 5 pts: Try the adaptive non-maximum suppression discussed in the textbook.

- up to 10 pts: Try an entirely different interest point detection strategy like that of MSER. If you implement an additional interest point detector, you can use it alone or you can take the union of keypoints detected by multiple methods.

Local feature description extra credit:

- up to 3 pts: The simplest thing to do is to experiment with the numerous SIFT parameters: how big should each feature be? How many local cells should it have? How many orientations should each histogram have? Different normalization schemes can have a significant effect, as well. Don't get lost in parameter tuning, though.

- up to 5 pts: If your keypoint detector can estimate orientation, your local feature descriptor should be built accordingly so that your pipeline is rotation invariant.

- up to 5 pts: Likewise, if you are detecting keypoints at multiple scales, you should build the features at the corresponding scales.

- up to 5 pts: Try different spatial layouts for your feature (e.g. GLOH).

- up to 10 pts: Try entirely different features (e.g. local self-similarity).

Local feature matching extra credit:

An issue with the baseline matching algorithm is the computational expense of computing distance between all pairs of features. For a reasonable implementation of the base pipeline, this is likely to be the slowest part of the code. There are numerous schemes to try and approximate or accelerate feature matching:

- up to 10 pts: Create a lower dimensional descriptor that is still accurate enough. For example, if the descriptor is 32 dimensions instead of 128 then the distance computation should be about 4 times faster. PCA would be a good way to create a low dimensional descriptor. You would need to compute the PCA basis on a sample of your local descriptors from many images.

- up to 5 pts: Use a space partitioning data structure like a kd-tree or some third party approximate nearest neighbor package to accelerate matching.

Writeup Questions

Please answer these in your write-up.- What characteristics should good feature detectors have? Consider the tradeoff between invariance and discriminative power.

- What do the eigenvalues of the 'M' second moment matrix represent?

- What is a good method for feature descriptor matching and why?

Rubric

- +30 pts: Implementation of Harris corner detector in

get_interest_points.m - +40 pts: Implementation of SIFT-like local feature in

get_features.m - +10 pts: Implementation of "Ratio Test" matching in

match_features.m - +20 pts: Writeup, answering the three questions above, with several examples of local feature matching.

- +10 pts: Extra credit (up to ten points).

- -5*n pts: Lose 5 points for every time (after the first) you do not follow the instructions for the hand in format

Web-Publishing Results

The professor and TAs will select impressive projects to be highlighed in class and on the course website. If you do not want your results published to the web, you can choose to opt out. If you want to opt out, then please email the instructor.

Hand-in process

We will be conducting anonymous TA grading, so please don't include your name or ID in your writeup.

The hand-in structure is very important as you will lose points if you do not follow instructions. Every time after the first that you do not follow instructions, you will lose 5 points. The folder you hand in must contain the following:

- README - text file containing anything about the project that you want to tell the TAs but not anyone else (e.g., if your project is selected to be published on the webpage).

- code/ - directory containing all your code for this assignment.

- html/ - directory containing all your html report for this assignment (including images). Only this folder will be published to the course web page, so your webpage cannot contain pointers to images in other folders of your handin.

- html/index.html - Web page for your results

Hand in your project through using the `cs1430_handin' script. Navigate to your completed homework directory structure (e.g., ~/CS1430/Proj2/), then call cs1430_handin from the terminal. Choose proj2. You should receive an email confirming your handin.

Credits

Assignment developed by James Hays.